There are dozens of ways to improve campaign performance. Everything from the color on a call to action button to testing a new platform can give you better results.

But that doesn’t mean every UA optimization tactic you’ll run across is worth doing.

This is especially true if you’ve got limited resources. If you’re on a small team, or you’ve got budget constraints or time constraints, those limitations will preclude you from trying every optimization trick in the book.

Even if you’re the exception, and you’ve got all the resources you need, there’s always the issue of focus.

Focus may actually be our most precious commodity. Amid all the noise of day-to-day campaign management, choosing the right thing to focus on makes all the difference. There’s no point in clogging up your to-do list with optimization tactics that won’t make a significant difference.

Fortunately, it’s not hard to see which areas of focus are worthwhile. After managing over $3 billion in ad spend, we’ve seen what really makes a difference, and what doesn’t. In our experience, these are the three biggest drivers of UA campaign performance right now:

- Creative optimization

- Targeting

- Budgeting

Get those three things dialed in, and all the other incremental little optimization tricks won’t matter nearly as much. Once creative, targeting, and budget are working and aligned, your campaigns’ ROAS will be healthy enough that you won’t have to chase after every optimization technique you hear about for barely-noticeable improvements.

Let’s start with the biggest game-changer:

#1 Creative Optimization

Creative optimization is hands down the most effective way to boost ROAS. Period. It crushes any other optimization strategy, and honestly, we see it delivering better results than any other business activity in any other department.

But we’re not talking about just running a few split-tests. To be effective, creative optimization has to be strategic, efficient, and ongoing.

We’ve developed an entire methodology around creative optimization called Quantitative Creative Testing. The fundamentals of it are:

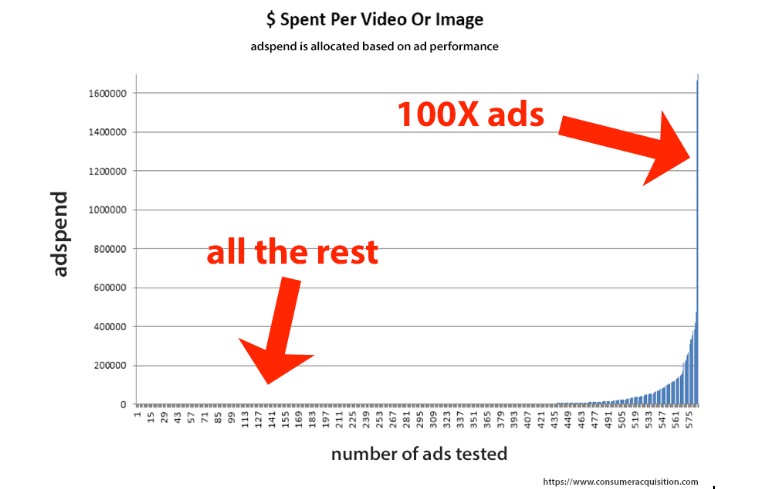

- Only a tiny percentage of the ads you create ever perform.

- Usually, only 5% of ads ever actually beat control. But that’s what you need, isn’t it: not just another ad, but an ad good enough to run, and to run profitably. The performance gap between winners and losers is massive, as the accompanying chart shows. It plots ad spend across 600 different pieces of creative, with spend allocated strictly on performance. Only a handful of those 600 ads really performed.

We develop and test two core types of creative: Concepts and Variations.

80% of what we test is a variation on a winning ad. This gives us incremental wins while allowing us to minimize losses. But we also test concepts – big, bold new ideas – 20% of the time. Concepts often tank, but occasionally they do perform. Then sometimes, they get breakout results that reinvent our creative approach for months. The scale of those wins justifies the losses.

We don’t play by the standard rules of statistical significance in A/B testing.

In classic A/B testing, you need about a 90-95% confidence level to achieve statistical significance. But (and this is critical), typical testing looks for tiny, incremental gains, such as a 3% lift.

We don’t test for 3% lifts. We’re looking for a lift of at least 20%. Because the number of impressions a test needs shrinks sharply as the effect you are trying to detect gets bigger, we can run tests for much less time than traditional A/B testing would require.

This approach saves our clients a ton of money and gets us actionable results far faster. That, in turn, allows us to iterate far more rapidly than our competitors. We can optimize creative in dramatically less time and with less money than traditional, old-school A/B testing would allow.
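To make the statistics concrete, here is a minimal sketch of the standard sample-size approximation for comparing two conversion rates. It is not the Quantitative Creative Testing methodology itself; the 2% baseline conversion rate, the 80% power setting, and the function name are assumptions chosen for illustration.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, relative_lift,
                            confidence=0.95, power=0.80):
    """Approximate users needed per variant for a two-sided
    two-proportion z-test (normal approximation)."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    # Critical values for the chosen confidence level and power.
    z_alpha = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


# Assumed 2% baseline conversion rate (illustrative only).
small_lift = sample_size_per_variant(0.02, 0.03)  # hunting a 3% lift
large_lift = sample_size_per_variant(0.02, 0.20)  # hunting a 20% lift
print(small_lift, large_lift, round(small_lift / large_lift))
```

Under these assumed inputs, the 20% test needs on the order of 40 times fewer users per variant than the 3% test, because the required sample grows roughly with the inverse square of the lift you want to detect.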

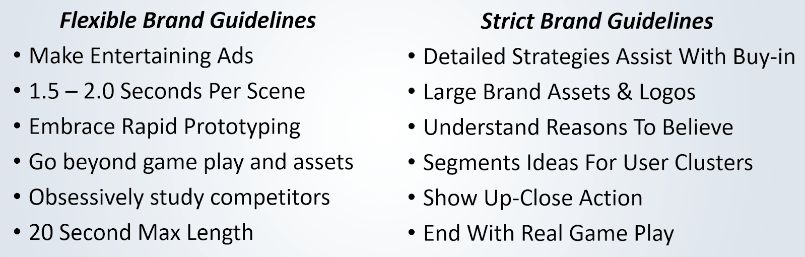

We ask our clients to be flexible about brand guidelines.

Branding is critical. We get it. But sometimes brand requirements stifle performance. So, we test. The tests we run that bend brand compliance guidelines don’t run long, so very few people see them, and so there’s minimal damage to brand consistency. We also do everything possible to adjust creative as quickly as possible, so it complies with brand guidelines while still preserving performance.

Those are the key points of our current methodology around creative testing. Our approach is constantly evolving – we test and challenge our testing methodology almost as much as the creative we run through it.

Why It’s Time to Rethink Creative as the Primary Driver of Campaign Performance

Naming creative as the #1 way to improve performance is unconventional in UA and digital advertising, at least among people who have been doing it for a while.

For years, when a UA manager used the word optimization, they meant making changes to budget allocations and audience targeting. Due to the limits of the technology we’ve had up until fairly recently, we simply didn’t get PPC campaign performance data fast enough to act on it and make a difference during a campaign.

Those days are over. Now, we get real-time or nearly real-time performance data from campaigns. And every micron of performance you can squeeze out of a campaign matters. This is especially true in an increasingly mobile-centric ad environment, where smaller screens mean there isn’t enough room for four ads; there’s only room for one.

So, while targeting and budget manipulations are powerful ways to improve performance (and you need to use them alongside creative testing), we know creative testing beats the pants off both of them.

Google itself has acknowledged this, citing a study that found “on average, media placements only account for about 30% of a brand campaign’s success while the creative drives 70%.”

But that’s not the only reason to get laser-focused on optimizing creative. Possibly the best reason to focus on creative is that the two other legs of the UA stool, budget and targeting, are becoming increasingly automated. The algorithms at Google Ads and Facebook have taken over much of what used to be a UA manager’s daily tasks.

This has several powerful consequences, including that it levels the playing field to a large extent. So, any UA manager who had been getting an advantage thanks to third-party ad tech is basically out of luck. Their competitors now have access to the same tools.

That means more competition, but more importantly, it means we’re shifting towards a world where creative is the only real competitive advantage left.

All that said, there are still significant performance wins to be had with better targeting and budgeting. They may not have the same potential impact as creative, but they have to be dialed in or your creative won’t perform like it could.

#2 Targeting

Find the right person to advertise to, and half the battle is won. And thanks to fantastic tools like lookalike audiences (now available from both Facebook and Google), we can do incredibly detailed audience segmentation. We can break audiences out by:

- “Stacking” or combining lookalike audiences

- Isolating by country

- “Nesting” audiences, where we take a 2% audience, identify the 1% members inside of it, then subtract the 1%ers out so we’re left with a pure 2% audience

These sorts of super-targeted audiences allow us to optimize performance at a level most other advertisers can’t match, and they also let us avoid audience fatigue for far longer than we otherwise could. They’re an essential tool for maximum performance.
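The nesting idea in the list above boils down to set subtraction. Here is a minimal sketch, assuming each lookalike tier can be represented as a plain set of user IDs. On a real ad platform you would express this with audience exclusions rather than raw IDs, and the function and variable names here are hypothetical.

```python
def nest_audiences(tiers):
    """Turn overlapping lookalike tiers into non-overlapping bands.

    tiers maps a lookalike percentage to the set of user IDs in that
    tier, where each larger tier contains the smaller ones.
    """
    bands = {}
    already_covered = set()
    for percent in sorted(tiers):
        # Keep only the users not already claimed by a tighter tier.
        bands[percent] = tiers[percent] - already_covered
        already_covered |= tiers[percent]
    return bands


# Toy data: the 2% audience contains everyone in the 1% audience.
lookalike_1 = {"u1", "u2"}
lookalike_2 = {"u1", "u2", "u3", "u4"}

bands = nest_audiences({1: lookalike_1, 2: lookalike_2})
print(sorted(bands[2]))  # the "pure" 2% band, with the 1%ers removed
```

Because the resulting bands never overlap, each one can be given its own creative and budget without the same person being counted in two audiences.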

We do so much audience segmentation and targeting work that we built a tool to make it easier. Audience Builder Express lets us create hundreds of lookalike audiences with ridiculously granular targeting in seconds. It also allows us to alter the value of certain audiences just enough so that Facebook can better target the super-high value prospects.

While all this aggressive audience targeting helps performance, it has one other benefit: It lets us keep creative alive and performing well for much longer than we could without it. The longer we can keep creative alive and performing well, the better.

#3 Budgeting

We’ve come a long way from bid edits at the ad set or the keyword level. With PPC campaign budget optimization, AEO bidding, value bidding, and other tools, now we can simply tell the algorithm which types of conversions we want, and it will go get them for us.

There is still an art to budgeting, though. Per Facebook’s Structure for Scale best practices, while UA managers do need to step back from close control of their budgets, they do have one level of control left. That’s to shift which phase of the purchase cycle they want to target.

So if, say, a UA manager needs more conversions so the Facebook algorithm can perform better, they can move the event they’re optimizing for closer to the top of the funnel, to app installs, for example. Then, as the data accrue and they have enough conversions to ask for a more specific, less frequent event (like in-app purchases), they can change their conversion event target to something more valuable.

This is still budgeting, in the sense that it’s managing spend, but at a strategic level. Now that the algorithms run so much of this side of UA management, we humans are left to figure out the strategy, not individual bids.
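The funnel-shifting decision described above can be sketched as a simple rule: optimize for the deepest event that still generates enough conversions. This is only an illustration. The event names are hypothetical, and the 50-conversions-per-week threshold is a placeholder assumption, not a figure from this article.

```python
# Funnel events ordered from top (frequent) to bottom (valuable).
# These names are hypothetical placeholders.
FUNNEL = ["app_install", "registration", "in_app_purchase"]


def pick_optimization_event(weekly_conversions, min_weekly=50):
    """Return the deepest funnel event with at least min_weekly
    conversions, falling back to the top-of-funnel event."""
    chosen = FUNNEL[0]
    for event in FUNNEL:
        if weekly_conversions.get(event, 0) >= min_weekly:
            chosen = event
    return chosen


# Early in a campaign, purchases are too rare to optimize for.
early = {"app_install": 400, "registration": 120, "in_app_purchase": 12}
# Later, enough purchase data has accrued to move down the funnel.
later = {"app_install": 2500, "registration": 700, "in_app_purchase": 80}

print(pick_optimization_event(early))
print(pick_optimization_event(later))
```

In practice the right threshold depends on the platform and the account, so treat `min_weekly` as a knob to tune rather than a rule.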

UA Performance is a Three-Legged Stool

Each of these primary drivers is critical to campaign performance, but it’s not until you use them in concert that they really start to stoke ROAS. They are all part of the proverbial three-legged stool. Ignore one, and suddenly the other two won’t hold you up.

This is a big part of the art of campaign management right now – bringing creative, targeting, and budgeting together in just the right way. The exact execution of this varies from industry to industry, client to client, and even week to week. But that’s the challenge of great user acquisition management right now. For some of us, it’s a lot of fun.